Optical Parameters for Machine Vision Applications, Part 2

4 MIN READ | 02 December 2025 | By Allan Anderson

Lenses Explained

In part one of this blog, we introduced machine vision optics and covered a few of the machine vision lens fundamentals: Field of View, Working Distance (WD) and Sensor Resolution. In this second part we run through more of these optical parameters, examining how lenses work and guiding you through choosing the right optics for your machine vision application.

Image Quality depends on several key variables: Field of View, Working Distance, Resolution, Depth of Field, Sensor Size, Contrast, Distortion, and Perspective.

Each of these variables can be seen as a lever that affects the overall image quality of your machine vision system. Each will need to be pushed or pulled, depending on your application, in order to choose the right components for your system.

Why are Lenses Important?

As pixel sizes shrink in today’s high-end machine vision cameras, it is becoming more and more important to choose the right lens early in the design of your vision system. As sensor resolution gets better and better, the lens is now more likely to be the limiting factor for the maximum obtainable resolution of a machine vision system. By understanding the key parameters involved, system integrators will be well-equipped to pick the right lens for their application.

Lens Resolution

As discussed in the last blog, the precise measurement of a machine vision system's ability to reproduce object detail is called resolution.

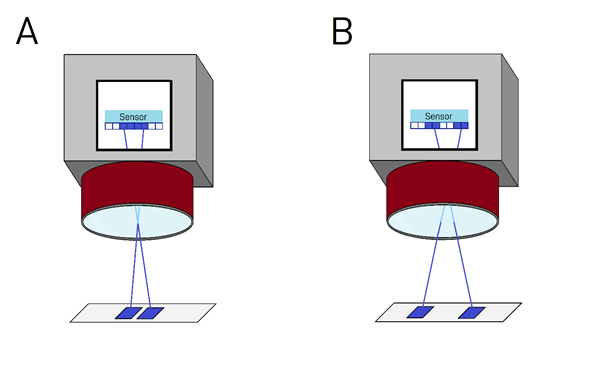

In the above diagrams, we have an example of a machine vision system that is being held back by the lens. The machine vision camera is perceiving an object with two areas of interest, separated by some tangible distance.

In diagram A, the areas of interest are very close together. While the sensor has a high enough resolution to detect the gap between these two areas, the lens is not sharp enough to resolve this difference, and so the image that ends up hitting the sensor fails to resolve the level of detail required for this application.

In B, the areas of interest are further apart, and so this time the lens is able to resolve the gap between them. The key conclusion here is that application A would require a sharper lens to get successful results, whereas this lens is fine for the lower level of detail required in application B.

Now let us look at the same applications with a sharper lens.

As the lens in diagrams C and D has a better resolution, the system’s ability to resolve finer details has improved. By looking at the pixels on the sensor in C, we can see that the fine distance between the two areas of interest can now be resolved, providing successful results for this machine vision application.

In D, the disparity between blue pixels has increased, providing a higher level of detail than in B. Again, it is worth thinking about whether you will need such a high level of detail for your application, as sharper lenses come at a higher cost.

The conclusion of these examples is that, in order to get the most out of your camera’s sensor, the lens needs a resolution rating equal to or better than that of the sensor. Otherwise the lens, not the sensor, sets the resolution of your system.
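To put rough numbers on the lens-versus-sensor comparison, here is a minimal Python sketch. It assumes the lens resolution is quoted as a single figure in line pairs per millimetre (lp/mm) and compares it with the sensor's Nyquist limit, which is one line pair per two pixels. The function names and the example pixel size and lens ratings are hypothetical, chosen for illustration only. Real lens performance varies across the image and is better described by an MTF chart, so treat this as a first-pass check rather than a selection rule.

```python
def sensor_nyquist_lp_per_mm(pixel_size_um: float) -> float:
    """Finest line-pair frequency the sensor can sample, in lp/mm.

    One line pair (one dark line plus one bright line) needs at least
    two pixels, so the limit is 1 / (2 * pixel size in mm).
    """
    if pixel_size_um <= 0:
        raise ValueError("pixel size must be positive")
    pixel_size_mm = pixel_size_um / 1000.0
    return 1.0 / (2.0 * pixel_size_mm)


def limiting_component(pixel_size_um: float, lens_lp_per_mm: float) -> str:
    """Return 'lens' if the lens rating is below the sensor limit, else 'sensor'."""
    nyquist = sensor_nyquist_lp_per_mm(pixel_size_um)
    return "lens" if lens_lp_per_mm < nyquist else "sensor"


if __name__ == "__main__":
    pixel_um = 3.45  # hypothetical pixel size in micrometres
    print(f"Sensor limit: {sensor_nyquist_lp_per_mm(pixel_um):.1f} lp/mm")
    for lens_rating in (100.0, 200.0):  # hypothetical lens ratings in lp/mm
        print(lens_rating, "lp/mm lens ->", limiting_component(pixel_um, lens_rating))
```

In this sketch, a lens rated below the sensor limit corresponds to diagrams A and B, where the lens holds the system back; a lens rated at or above it corresponds to C and D.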

Contrast

Contrast in machine vision optics refers to the system’s ability to differentiate between white and black pixels at a given resolution. For a machine vision system to perform optimally, black areas on the subject should show up as black on the image and white should show up as white.

The more black and white pixels converge toward neighbouring shades of grey, the lower the contrast will be at that given frequency.

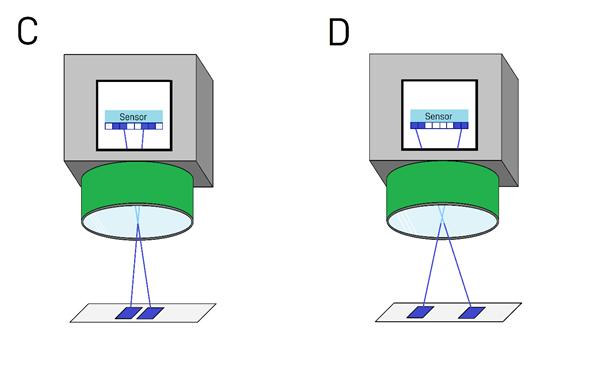

Machinery Images: Picture A has low contrast; Picture B has high contrast.

As you can see from the above, the right-hand image has a far greater disparity in strength between light and dark lines, meaning better contrast.
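One common way to put a number on this is Michelson contrast, (I_max - I_min) / (I_max + I_min), measured over a pattern of light and dark lines. The sketch below is an illustration only: the function name and the grey-level samples are hypothetical, and the source images above were not measured this way.

```python
from typing import Sequence


def michelson_contrast(intensities: Sequence[float]) -> float:
    """Michelson contrast of a set of grey levels, between 0.0 and 1.0.

    1.0 means the dark lines are fully black relative to the bright lines;
    values near 0.0 mean light and dark have merged into similar greys.
    """
    if len(intensities) == 0:
        raise ValueError("need at least one intensity value")
    i_max = max(intensities)
    i_min = min(intensities)
    if i_max + i_min == 0:
        return 0.0  # an all-black patch carries no contrast
    return (i_max - i_min) / (i_max + i_min)


if __name__ == "__main__":
    # Hypothetical 8-bit grey levels sampled across alternating lines.
    washed_out = [100, 150, 100, 150]  # lines have blurred toward grey
    crisp = [0, 255, 0, 255]           # lines are fully black and white
    print("washed out:", michelson_contrast(washed_out))
    print("crisp:", michelson_contrast(crisp))
```

Running the same measurement on line patterns of increasing fineness shows how contrast falls away as the detail approaches the limit of the lens.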

Your choice of lens, sensor and illumination will impact and determine the level of contrast obtained by your system, so think carefully about your application and the amount of contrast you will need your image to have.

Ultimately, as with just about every other parameter discussed thus far, the level of contrast required will depend on what your application calls for. The more visually subtle details that exist on your target object, the more contrast you will need in your system to pick them out.

Depth of Field (DOF)

Within every machine vision system’s field of view, there is a range of distances around the optimal focal point over which the image is deemed to satisfy an acceptable level of image quality. This range is the depth of field.

Narrow and wide depths of field in an automotive setting.

Depth of field varies from lens to lens. Some lenses will generate a narrow depth of field, as in the first diagram above: the object’s furthest and nearest points to the camera start to become blurred as they stray further from the optimal focal point. Not only is clarity lost at these points, but resolution and contrast will deteriorate as well, all of which contributes to poor image quality.

Other lenses will be able to generate a wider depth of field, like in the second diagram, allowing for more flexibility in machine vision applications. This is particularly useful when trying to image objects with intricate geometric structures or large objects with considerable depth.
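For a rough feel of how narrow or wide a depth of field might be, the standard thin-lens approximation can be sketched in a few lines of Python. This goes beyond the parameters covered so far, so note the assumptions: it needs the focal length, the f-number (aperture setting) and an acceptable blur diameter on the sensor (the circle of confusion), and it measures the focus distance from the lens. The function name and all example values are hypothetical; real lenses will deviate from this idealised model.

```python
def depth_of_field_limits(focal_length_mm: float, f_number: float,
                          circle_of_confusion_mm: float,
                          focus_distance_mm: float) -> tuple[float, float]:
    """Near and far limits of acceptable focus (mm), thin-lens approximation."""
    if focus_distance_mm <= focal_length_mm:
        raise ValueError("focus distance must exceed the focal length")
    f = focal_length_mm
    s = focus_distance_mm
    # Hyperfocal distance: focusing here keeps everything out to infinity acceptable.
    hyperfocal = f * f / (f_number * circle_of_confusion_mm) + f
    near = s * (hyperfocal - f) / (hyperfocal + s - 2.0 * f)
    if s >= hyperfocal:
        far = float("inf")
    else:
        far = s * (hyperfocal - f) / (hyperfocal - s)
    return near, far


if __name__ == "__main__":
    # Hypothetical setup: 25 mm lens, 0.01 mm acceptable blur, focused at 500 mm.
    for n in (4.0, 8.0):
        near, far = depth_of_field_limits(25.0, n, 0.01, 500.0)
        print(f"f/{n:g}: sharp from {near:.1f} mm to {far:.1f} mm "
              f"(depth of field {far - near:.1f} mm)")
```

In this model, closing the aperture (a higher f-number) widens the depth of field, which is one of the levers available when imaging objects with considerable depth.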

Helping You Make the Right Vision Decision

Stay tuned to this series of blogs as we continue to put the spotlight on optics, exploring aberrations, barrelling, distortion, MTF charts, and an ultimate guide to choosing the right lens.

Be sure to check our Lenses and Cameras pages for the best machine vision products on the market from industry-leading brands such as Kowa, VST, Computar, Tamron, and Theia.