Stereo Vision for 3D Machine Vision Applications

4 MIN READ | 02 December 2025 | By Allan Anderson

So far in this series of 3D machine vision blogs we have looked at an overview of the four main 3D technologies used in machine vision (Stereo Vision, Time of Flight, Structured Light, and 3D Profiling/Laser Triangulation), and explored 3D profiling for machine vision applications.

In this blog post we will cover stereo vision, exploring the technology in play and how it can solve problems in machine vision.

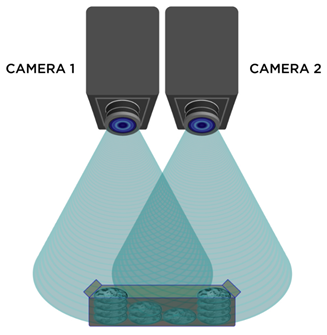

What is Stereo Vision?

As with other types of 3D imaging technology, the goal with stereo vision is to solve the issue of depth perception – the core of all 3D problems. Stereo vision is a machine vision technique that can provide full field of view 3D measurements using two or more machine vision cameras. The foundation of stereo vision is similar to 3D perception in human vision and is based on triangulation of rays from multiple viewpoints.

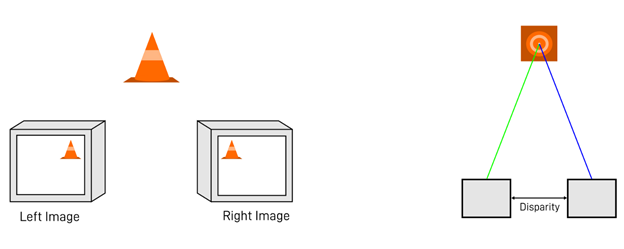

A stereo camera closely copies how our eyes work to give us accurate, real-time depth perception. It achieves this by using two sensors a set distance apart to triangulate similar pixels from both 2D planes. Each pixel in a digital camera image collects light that reaches the camera along a 3D ray. If a feature in the world can be identified as a pixel location in an image, we know that this feature lies on the 3D ray associated with that pixel. If we use multiple cameras, we can obtain multiple rays. Finding where these rays intersect tells us the 3D location of an object and its features.
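To make the ray intersection idea concrete, here is a minimal Python sketch. It assumes each camera's ray is already known as an origin and a direction in a shared world frame (in practice this comes from calibration). Because real rays rarely meet exactly, it returns the midpoint of the shortest segment joining them, which is one common approach and not necessarily what any particular camera uses. The function name and the example coordinates are hypothetical.

```python
# Midpoint triangulation of two 3D rays (illustrative sketch only).

def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def _scale(a, s):
    return tuple(x * s for x in a)

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate_midpoint(origin1, dir1, origin2, dir2):
    """Return the point midway between the closest points of two rays.

    Each ray is origin + s * direction. Directions need not be unit length.
    """
    w = _sub(origin1, origin2)
    a, b, c = _dot(dir1, dir1), _dot(dir1, dir2), _dot(dir2, dir2)
    d, e = _dot(dir1, w), _dot(dir2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no unique closest point")
    s = (b * e - c * d) / denom   # parameter along ray 1
    t = (a * e - b * d) / denom   # parameter along ray 2
    p1 = _add(origin1, _scale(dir1, s))
    p2 = _add(origin2, _scale(dir2, t))
    return _scale(_add(p1, p2), 0.5)

if __name__ == "__main__":
    # Hypothetical setup: two cameras 0.1 m apart, both seeing the same feature.
    left_cam, right_cam = (0.0, 0.0, 0.0), (0.1, 0.0, 0.0)
    feature = (0.2, 0.05, 2.0)
    point = triangulate_midpoint(
        left_cam, _sub(feature, left_cam),
        right_cam, _sub(feature, right_cam),
    )
    print(point)
```

With noisy measurements the two rays pass close to each other without touching, and the midpoint gives a reasonable estimate of the feature's position.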

Through basic triangulation of pixels and ray intersections, we can determine the 3D location of the traffic cone. The greater the disparity (the shift in a feature's position between the two images), the greater the angular offset between the two views of the object, and therefore the more precise the 3D depth information. Viewing the scene from more than one position can also reduce problems such as optical occlusion, but careful calibration is required for this to succeed.

Passive and Active Stereo Vision

Stereo vision in machine vision is considered a passive technology, as it does not require any artificial illumination to work. A stereo camera can simply be plugged in, calibrated, and deployed. Some stereo vision applications will, however, benefit from artificial illumination or a structured light source to aid visibility. In fact, some applications may rely on it to work. This is known as active stereo and has its pros and cons, just as passive stereo does.

Advantages and Disadvantages of Stereo Vision

Stereo vision can be CPU intensive when it is not hardware accelerated (FPGA, GPU, etc.). This is due to steps such as semi-global matching (SGM), which matches pixels between the two camera images, and lens distortion compensation, both of which need to take place for stereo vision to work accurately and consistently over time. If you are finding that your embedded system or industrial computing unit is struggling, Teledyne's Bumblebee X stereo vision cameras remove the stress on a PC’s CPU, as most of the processing happens on a powerful FPGA (more on this further down).

Passive stereo camera systems can be deployed without the need for lasers or LEDs and can generally perform effectively in most ambient lighting conditions. That said, if the system is operating in low light, or scanning scenes or objects with textureless surfaces, stereo vision tends to underperform as a 3D technology. It is therefore best to play to stereo vision’s strengths: it achieves excellent results when properly deployed in well-lit environments, in applications such as bin picking or autonomous vehicles.

With no lasers or expensive lighting required, passive stereo vision can be much more affordable than other 3D machine vision technologies. Furthermore, because stereo vision, unlike 3D profiling, places no constraints on how the target object moves, it copes well with long distances and moving objects, something that other 3D imaging technologies tend to fall short on. Once calibrated, a stereo vision camera system can detect depth in real time, and when combined with the right software to display the 3D image, users can benefit from colour depth mapping for added visibility.
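To give a feel for why stereo matching is computationally heavy, and why textureless surfaces cause trouble, the sketch below runs a very simple sum-of-absolute-differences block match along a single image row. This is a deliberately simplified stand-in, not SGM itself, and it assumes the two images are already rectified so that matching pixels lie on the same row. The depth conversion uses the standard relation for a rectified pair, depth = focal length × baseline / disparity. All function names, the pixel values, and the focal length and baseline figures are hypothetical and are not Bumblebee X specifications.

```python
# Naive 1D block matching plus disparity-to-depth conversion (illustrative only).

def match_scanline(left, right, max_disp, half_win=1):
    """For each left-image pixel, pick the disparity with the lowest
    sum of absolute differences (SAD) over a small window."""
    n = len(left)
    disparities = [0] * n
    for x in range(half_win, n - half_win):
        best_cost, best_d = None, 0
        for d in range(max_disp + 1):          # every candidate shift is tested
            if x - d - half_win < 0:
                break                          # window would leave the image
            cost = sum(
                abs(left[x + k] - right[x - d + k])
                for k in range(-half_win, half_win + 1)
            )
            if best_cost is None or cost < best_cost:
                best_cost, best_d = cost, d
        disparities[x] = best_d
    return disparities

def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in metres for a rectified pair: Z = f * B / d."""
    if disparity_px <= 0:
        return float("inf")                    # no measurable shift
    return focal_px * baseline_m / disparity_px

if __name__ == "__main__":
    # Synthetic textured row; the left view sees everything shifted by 3 pixels.
    right_row = [10, 50, 20, 80, 30, 90, 40, 70, 60, 15, 95, 25]
    shift = 3
    left_row = [0] * shift + right_row[:-shift]

    disp = match_scanline(left_row, right_row, max_disp=5)
    print(disp)
    # Hypothetical optics: 1200 px focal length, 0.12 m baseline.
    print(depth_from_disparity(disp[6], focal_px=1200, baseline_m=0.12))
```

Every pixel is compared against every candidate disparity, so the work grows with image size multiplied by search range, which is why offloading this to an FPGA or GPU helps. If the row were a flat, textureless value, every candidate would score the same cost and the match would be ambiguous.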

Colour mapping helps to visually quantify distances on-screen.

Stereo cameras are great for a wide range of applications. Autonomous vehicles, for example, have benefitted greatly from stereo vision systems. Combining this technology with a neural network can result in effective solutions for self-driving cars and other autonomous machines.

Choosing the Right Stereo Camera

Teledyne's Bumblebee X 3D stereo camera offers fantastic stereo imaging results at an affordable price point. Working at speeds of up to 20 fps, the Teledyne Bumblebee X offers fast 3D measurement. In addition, Bumblebee X achieves an excellent resolution of up to 3 megapixels in both the camera image and the depth image. Bumblebee X combines a 3D stereo camera and image processing in one device. By offloading processing to an FPGA, the Bumblebee X handles the demanding computation itself, freeing your system’s CPU. Whether for static environments or for hard, critical real-time applications in dynamic environments, Teledyne's Bumblebee X 3D depth camera provides you with exactly the image and depth data you need for your machine vision application.

Teledyne Bumblebee X

For further information on the above, feel free to consult our informative e-book on 3D Imaging Techniques. Specifications for different 3D imaging solutions can be found in the data sheets of our cameras, available on our website to help you choose the optimal 3D machine vision camera model for your industrial application.